1.1.3.1 Production cycle

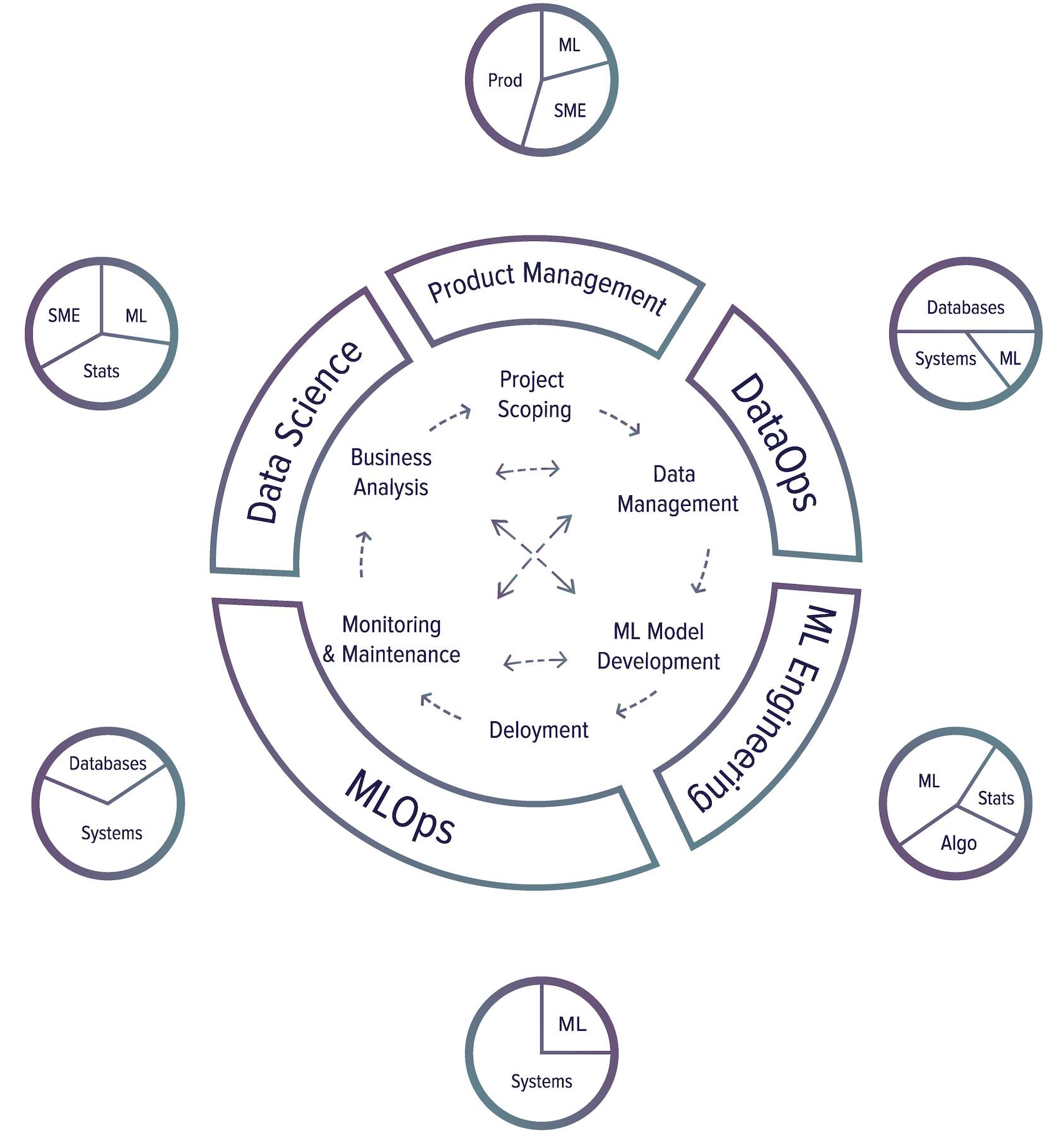

To understand different roles involving machine learning in production, let’s first explore different steps in a production cycle. There are six major steps in a production cycle.

⚠ On the main skills listed at each step ⚠

The main skills listed at each step below will upset many people, as any attempt to simplify a complex, nuanced topic into a few sentences would. This portion should only be used as a reference to get a sense of the skill sets needed for different ML-related jobs.

Project scoping

A project starts with scoping the project, laying out goals & objectives, constraints, and evaluation criteria. Stakeholders should be identified and involved. Resources should be estimated and allocated.

Main skills needed: product management, subject matter expertise to understand problems, some ML knowledge to know what ML can and can’t solve.

Data management

Data used and generated by ML systems can be large and diverse, which requires scalable infrastructure to process and access it fast and reliably. Data management covers data sources, data formats, data processing, data control, data storage, etc.

Main skills needed: databases/query engines to know how to store/retrieve/process data, and systems engineering to implement distributed systems that process large amounts of data. Minimal ML knowledge to optimize the organization of data for ML access patterns would be helpful, but is not required.

ML model development

From raw data, you need to create training datasets and possibly label them, then generate features, train models, optimize models, and evaluate them. This is the stage that requires the most ML knowledge and is most often covered in ML courses.

Main skills needed: This is the part of the process that requires the most ML knowledge, along with statistics and probability to understand the data and evaluate models. Since feature engineering and model development require writing code, this part also needs coding skills, especially in algorithms and data structures.

Deployment

After a model is developed, it needs to be made accessible to users.

Main skills needed: Bringing an ML model to users is largely an infrastructure problem: how to set up your infrastructure, or help your customers set up theirs, to run your ML application. These applications are often data-, memory-, and compute-intensive. It might also require ML knowledge to compress models and optimize inference latency, unless you can push these tasks to the previous step of the process.

Monitoring and maintenance

Once in production, models need to be monitored for performance decay and maintained/updated to adapt to changing environments and changing requirements.

Main skills needed: Monitoring and maintenance is also an infrastructure problem that requires computer systems knowledge. Monitoring often requires generating and tracking a large amount of system-generated data (e.g. logs), and managing this data requires an understanding of the data pipeline.
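As a rough illustration of what monitoring for performance decay can look like, here is a minimal sketch that tracks accuracy over a sliding window of recent predictions and flags a drop below a baseline. The class name `RollingAccuracyMonitor`, the window size, and the `max_drop` threshold are all hypothetical choices for this example, not a prescribed design. It also assumes ground-truth labels eventually become available for each prediction.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions.

    Hypothetical helper for illustration only; real monitoring systems
    usually track many more signals (latency, input drift, etc.).
    """

    def __init__(self, baseline_accuracy, window=1000, max_drop=0.05):
        self.baseline_accuracy = baseline_accuracy  # accuracy measured before deployment
        self.max_drop = max_drop                    # tolerated drop before flagging (assumed value)
        self.outcomes = deque(maxlen=window)        # 1 if prediction was correct, else 0

    def record(self, prediction, label):
        """Store whether one prediction matched its ground-truth label."""
        self.outcomes.append(1 if prediction == label else 0)

    def accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded yet."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def has_decayed(self):
        """True if windowed accuracy fell more than `max_drop` below the baseline."""
        current = self.accuracy()
        return current is not None and current < self.baseline_accuracy - self.max_drop


monitor = RollingAccuracyMonitor(baseline_accuracy=0.9, window=10, max_drop=0.05)
for _ in range(10):
    monitor.record(prediction=1, label=1)   # ten correct predictions
healthy = monitor.has_decayed()
for _ in range(5):
    monitor.record(prediction=1, label=0)   # five wrong predictions push out five correct ones
degraded = monitor.has_decayed()
```

In practice, the recorded outcomes would come from logs joined with delayed labels, which is where the data pipeline knowledge mentioned above comes in.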

Business analysis

Model performance needs to be evaluated against business goals and analyzed to generate business insights. These insights can then be used to eliminate unproductive projects or scope out new projects.

Main skills needed: This part of the process requires ML knowledge to interpret an ML model’s outputs and behavior, in-depth statistics and probability knowledge to extract insights from data, as well as subject matter expertise to map these insights to the practical problems the ML models are supposed to solve.
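To make "evaluated against business goals" a bit more concrete, here is a small sketch comparing two hypothetical ad models on two different metrics: impressions (how many times an ad is shown) and clickthrough rate (clicks divided by impressions). The model names and all numbers are made up for illustration. The point is only that the "best" model can change depending on which metric the business cares about.

```python
def clickthrough_rate(impressions, clicks):
    """Clicks divided by impressions; defined as 0.0 when nothing was shown."""
    if impressions == 0:
        return 0.0
    return clicks / impressions


# Made-up numbers for two hypothetical models.
models = {
    "model_a": {"impressions": 100_000, "clicks": 500},
    "model_b": {"impressions": 60_000, "clicks": 1_200},
}

# The model that shows the most ads is not necessarily the one users click on.
best_by_impressions = max(models, key=lambda name: models[name]["impressions"])
best_by_ctr = max(models, key=lambda name: clickthrough_rate(**models[name]))

for name, stats in models.items():
    ctr = clickthrough_rate(**stats)
    print(f"{name}: impressions={stats['impressions']}, CTR={ctr:.2%}")
```

Here `model_a` wins on impressions while `model_b` wins on clickthrough rate, so the choice of metric needs input from people who understand the business goal.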

Skill annotation

- Systems: systems engineering, e.g. building distributed systems, container deployment.

- Databases: data management, storage, processing, databases, query engines. This is closely related to Systems since you might need to build distributed systems to process large amounts of data.

- ML: linear algebra, ML algorithms, etc.

- Algo: algorithmic coding

- Stats: probability, statistics

- SME: subject matter expertise

- Prod: product management

The most successful approach to ML production I’ve seen in the industry is iterative and incremental development. It means that you can’t really be done with a step, move to the next, and never come back to it again. There’s a lot of back and forth among various steps.

Here is one common workflow that you might encounter when building an ML model to predict whether an ad should be shown when users enter a search query.⁸

⁸ Praying and crying not featured but present through the entire process.

- Choose a metric to optimize. For example, you might want to optimize for impressions -- the number of times an ad is shown.

- Collect data and obtain labels.

- Engineer features.

- Train models.

- During error analysis, you realize that errors are caused by wrong labels, so you relabel data.

- Train model again.

- During error analysis, you realize that your model always predicts that an ad shouldn’t be shown, and the reason is that 99.99% of the data you have is a no-show (an ad shouldn’t be shown for most queries). So you have to collect more data on ads that should be shown.

- Train model again.

- The model performs well on your existing test data, which is by now two months old. But it performs poorly on the test data from yesterday. Your model has degraded, so you need to collect more recent data.

- Train model again.

- Deploy model.

- The model seems to be performing well but then the business people come knocking on your door asking why the revenue is decreasing. It turns out the ads are being shown but few people click on them. So you want to change your model to optimize for clickthrough rate instead.

- Start over.
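The class imbalance step in the workflow above is worth a small illustration. The sketch below uses made-up data in which 99.99% of examples are no-shows and a model that always predicts "don't show". The function name `evaluate` and the convention of reporting precision as 0.0 when the model makes no positive predictions are assumptions made for this example.

```python
def evaluate(labels, predictions):
    """Compute accuracy, precision, and recall for binary labels (1 = show ad)."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)  # shown, and should be shown
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)  # shown, but shouldn't be
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)  # not shown, but should be
    correct = sum(1 for y, p in pairs if y == p)
    return {
        "accuracy": correct / len(pairs),
        # Assumed convention: report 0.0 when the denominator is zero.
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Made-up dataset: 1 positive out of 10,000 examples (99.99% no-show).
labels = [1] + [0] * 9_999
always_no_show = [0] * len(labels)  # a "model" that never shows an ad

metrics = evaluate(labels, always_no_show)
print(metrics)
```

Accuracy looks excellent because almost every example is a no-show, yet recall is zero: the model never finds the one ad that should be shown. This is why error analysis on such data tends to lead back to data collection rather than to more training.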

There are many people who will work on an ML project in production -- ML engineers, data scientists, DevOps engineers, subject matter experts (SMEs). They might come from very different backgrounds, with very different languages and tools, and they should all be able to work on the system productively. Cross-functional communication and collaboration are crucial.

🌳 Tip 🌳

As a candidate, understanding this production cycle is important. First, it gives you an idea of what work needs to be done to bring a model to the real world and the possible roles available. Second, it helps you avoid ML projects that are bound to fail when the organizations behind them don’t set them up in a way that allows iterative development and cross-functional communication.